Posted on March 28th, 2011 in Isaac Held's Blog

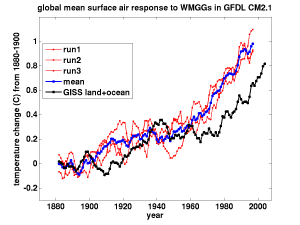

Global mean surface air warming due to well-mixed greenhouse gases in isolation, in 20th century simulations with GFDL’s CM2.1 climate model, smoothed with a 5yr running mean


“It is likely that increases in greenhouse gas concentrations alone would have caused more warming than observed because volcanic and anthropogenic aerosols have offset some warming that would otherwise have taken place.” (AR4 WG1 Summary for Policymakers).

One way of dividing up the factors that are thought to have played some role in forcing climate change over the 20th century is into 1) the well-mixed greenhouse gases (WMGGs: essentially carbon dioxide, methane, nitrous oxide, and the chlorofluorocarbons) and 2) everything else. The WMGGs are well-mixed in the atmosphere because they are long-lived, so they are often referred to as the long-lived greenhouse gases (LLGGs). Well-mixed in this context means that we can typically describe their atmospheric concentrations well enough, if we are interested in their effect on climate, with one number for each gas. These concentrations are not exactly uniform, of course, and studying the departure from uniformity is one of the keys to understanding sources and sinks.

We know the difference in these concentrations from pre-industrial times to the present from ice cores and modern measurements, we know their radiative properties very well, and they affect the troposphere in similar ways. So it makes sense to lump them together for starters, as one way of cutting through the complexity of multiple forcing agents.

When only the WMGGs are included in simulations of the 20th century, what do models predict? A result from the GFDL CM2.1 model is shown in the figure above. (Among other things, this model prescribes ozone and does not include the effects of methane oxidation on stratospheric water, so the effects of WMGGs through their perturbations to stratospheric ozone, and the trend in the methane source of stratospheric water, are not included here.) The identical model is run three times with different initial conditions taken from a pre-industrial control simulation, so these three realizations produce different details of the chaotic internal variability in the model. The three red lines in the figure are the global mean surface air temperature in these simulations; the blue line is their average, an estimate of the forced response (post #3). The black line is the land-plus-ocean global mean as estimated by the GISS product. In each case, temperatures are plotted as anomalies from the 1880-1900 mean, and a 5yr running mean is applied to each time series, removing much of the effect of ENSO variability in particular. These model runs continued only until the year 2000, so the 5yr running mean ends in 1998.
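The post-processing described above (anomalies relative to the 1880-1900 mean, a centered 5-year running mean, and an average over the three realizations) can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not GFDL's analysis code: the function names are hypothetical, and the input series are synthetic random data standing in for model output. It also shows why a centered 5-year window applied to annual values ending in 2000 has its last smoothed value centered on 1998.

```python
import numpy as np

def anomalies(temps, years, base_start=1880, base_end=1900):
    """Subtract the mean over base_start..base_end (inclusive) from a series."""
    mask = (years >= base_start) & (years <= base_end)
    return temps - temps[mask].mean()

def running_mean(series, years, window=5):
    """Centered running mean; returns smoothed values and their center years.

    With window=5 and annual data ending in year Y, the last center year is Y-2.
    """
    kernel = np.ones(window) / window
    smoothed = np.convolve(series, kernel, mode="valid")
    half = window // 2
    return smoothed, years[half:len(years) - half]

def process_ensemble(runs, years):
    """Anomalies plus smoothing for each member, and the ensemble mean
    (a rough estimate of the forced response)."""
    members = [running_mean(anomalies(r, years), years) for r in runs]
    center_years = members[0][1]
    smoothed = np.array([m[0] for m in members])
    return smoothed, smoothed.mean(axis=0), center_years

# Synthetic stand-in data: a linear trend plus noise, NOT CM2.1 output.
rng = np.random.default_rng(0)
years = np.arange(1880, 2001)
trend = 0.007 * (years - years[0])
runs = [trend + rng.normal(0.0, 0.1, years.size) for _ in range(3)]

members, ens_mean, yrs = process_ensemble(runs, years)
print(yrs[0], yrs[-1])  # first and last center years of the smoothed series
```

Because both the anomaly and the running mean are linear operations, averaging the smoothed members gives the same result as smoothing the ensemble average.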

When forced with the concentrations of WMGGs, this particular model overestimates the warming by about 30%. With the WMGG concentrations used in the model and standard expressions for radiative forcing due to these WMGGs, I estimate that the forcing increased by about 57% of the forcing due to a doubling of CO2 over the period shown in the figure (1880-2000), and by 65% of doubling if we were to extend to 2009 (using the forcing growth tabulated at this very useful NOAA web site for the years after 2000). Let’s use 60% as a round number for this ratio of the forcing in the recent period, which I will call the “20th century” for short, to the forcing due to doubling of CO2. This model’s equilibrium climate response to doubling of CO2 is about 3.4K. Shouldn’t we expect the warming over the 20th century in this model to be about 0.6 × 3.4K ≈ 2K?
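For reference, the arithmetic behind this naive estimate can be written out as below. The CO2 term uses the widely cited simplified expression of Myhre et al. (1998); that it is among the "standard expressions" meant above is an assumption, and the other WMGGs (methane, nitrous oxide, CFCs) have their own, non-logarithmic expressions that are not reproduced here. ΔF_WMGG denotes the summed WMGG forcing over the period.

```latex
\begin{aligned}
\Delta F_{\mathrm{CO_2}} &= 5.35\,\ln\!\left(C/C_0\right)\ \mathrm{W\,m^{-2}},
  \qquad F_{2\times} = 5.35\,\ln 2 \approx 3.7\ \mathrm{W\,m^{-2}},\\
\frac{\Delta F_{\mathrm{WMGG}}}{F_{2\times}} &\approx 0.6
  \quad\Rightarrow\quad
  \Delta T_{\mathrm{naive}} \approx 0.6 \times \Delta T^{\mathrm{eq}}_{2\times}
  = 0.6 \times 3.4\ \mathrm{K} \approx 2.0\ \mathrm{K}.
\end{aligned}
```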

No, because the model does not fully equilibrate on the time scale of a century. As already discussed in previous posts, a more useful point of comparison is the transient climate response (TCR), the warming at the time of doubling in a simulation in which CO2 is increased at 1%/year. The model used here has a TCR of about 1.5-1.6K. Rescaling by 0.6 we get about 0.9-1.0K, which evidently explains the model result in the figure to a first approximation.
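The contrast between the two rescalings is simple arithmetic; the short Python sketch below just makes it explicit, using only the round numbers quoted in this post (a forcing fraction of 0.6, an equilibrium sensitivity of 3.4K, and a TCR of 1.5-1.6K for CM2.1). The helper name is hypothetical, and the linear scaling is itself the first-order approximation the text describes.

```python
# Round numbers from the post; the linear scaling is a first-order approximation.
FORCING_FRACTION = 0.6   # 20th-century WMGG forcing as a fraction of CO2 doubling
ECS_CM21 = 3.4           # CM2.1 equilibrium response to doubled CO2, K
TCR_CM21 = (1.5, 1.6)    # CM2.1 transient climate response range, K

def scaled_warming(fraction, sensitivity):
    """Warming ~ (forcing / forcing for doubling) * sensitivity."""
    return fraction * sensitivity

naive = scaled_warming(FORCING_FRACTION, ECS_CM21)
transient = [scaled_warming(FORCING_FRACTION, t) for t in TCR_CM21]

print(f"equilibrium rescaling: {naive:.2f} K")
print(f"TCR rescaling: {transient[0]:.2f}-{transient[1]:.2f} K")
```

The equilibrium rescaling gives about 2.04K, roughly twice the 0.90-0.96K obtained from the TCR, which is why the choice of sensitivity measure matters so much here.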

A convenient archive of results from the world’s climate models for precisely this WMGG-only computation does not exist to my knowledge, but the standard 1%/year simulations used to define model TCRs are archived by PCMDI. Multiplying their TCRs by 0.6, you get a median of about 1.1-1.2K for the CMIP3 models, with a range (for 20 models) from 0.8 to 1.7K. This is a little rough, but I think it is a pretty good estimate of what these models would give in a WMGG-only simulation for the 20th century. (As mentioned in previous posts, CM2.1’s TCR is below the median of the CMIP3 models.)
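The rescaling applied to the archived 1%/year runs amounts to multiplying each model's TCR by 0.6 and summarizing the result. A minimal Python sketch follows; the helper name is hypothetical, and the TCR values in it are hypothetical placeholders chosen for illustration, not the CMIP3 values from the PCMDI archive, so its output should not be read as the median and range quoted above.

```python
from statistics import median

def wmgg_only_estimates(tcrs, forcing_fraction=0.6):
    """Rescale each model's 1%/yr TCR by the 20th-century forcing fraction.

    Returns (median, minimum, maximum) of the rescaled warming, in K.
    """
    scaled = [forcing_fraction * t for t in tcrs]
    return median(scaled), min(scaled), max(scaled)

# HYPOTHETICAL TCR values (K), for illustration only; NOT the CMIP3 archive values.
example_tcrs = [1.3, 1.5, 1.6, 1.9, 2.0, 2.2, 2.6]
med, low, high = wmgg_only_estimates(example_tcrs)
print(f"median {med:.2f} K, range {low:.2f}-{high:.2f} K")
```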

So most models generate trends from the WMGG forcing that are larger than the observed trend in the 20th century. A simple point of reference to keep in mind is that the least sensitive models in the CMIP3/AR4 archive roughly match the observed trend when forced with WMGGs only.

We will need to return to the centrally important question of the amplitude of internal variability, but I just want to point the reader to the figure again to get a sense of the magnitude of the internal variability in this particular model. Note how one of the red lines dips substantially in the 1950s, for example. Evidently this model can generate internal fluctuations in global mean temperature that produce substantial departures from a smooth warming trend, but it does not come close to generating variability comparable to the 20th century trend itself.

Forgoing a critique of this result for the time being, if we assume that natural variability does not, in fact, confound the century-long trend substantially, the observed warming provides us with a clear choice. Either

- the transient sensitivity to WMGGs of the CMIP3 models is too large, on average, or

- there is significant negative non-WMGG forcing, with aerosols being by far the most likely culprit.
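Under the same linear scaling, and with internal variability set aside as assumed above, the two options can be framed as one relation: observed warming ≈ TCR × (WMGG forcing fraction + non-WMGG forcing fraction). The Python sketch below, with a hypothetical helper name, solves for the non-WMGG fraction that a given TCR would require. The observed-warming value and the TCRs in the usage lines are placeholders, not numbers taken from the GISS record or the CMIP3 archive, and the 3.7 W/m^2 doubling forcing is an assumed round value.

```python
F_2X = 3.7  # approximate forcing for doubled CO2, W/m^2 (assumed round value)

def implied_other_forcing_fraction(observed_warming, tcr, wmgg_fraction=0.6):
    """Non-WMGG forcing, as a fraction of CO2 doubling, that a model with the
    given TCR would need in order to reproduce observed_warming.

    Assumes warming ~ tcr * (wmgg_fraction + other_fraction) and that internal
    variability contributes nothing to the century-scale trend.
    """
    return observed_warming / tcr - wmgg_fraction

OBSERVED = 0.7  # placeholder warming in K, NOT a measured value
for tcr in (1.3, 1.9, 2.8):  # hypothetical TCRs from low to high sensitivity
    other = implied_other_forcing_fraction(OBSERVED, tcr)
    print(f"TCR {tcr:.1f} K -> non-WMGG forcing {other:+.2f} x F2x "
          f"({other * F_2X:+.2f} W/m^2)")
```

A result near zero corresponds to the first option (a TCR low enough that WMGGs alone fit the trend); increasingly negative results correspond to the second, a larger offsetting forcing.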

Two simple things to look out for when this issue is discussed:

- Watch out for those who estimate the expected 20th century warming due to WMGGs by rescaling equilibrium sensitivity rather than TCR.

- Conversely, watch out for those who compare the observed warming to the model’s response to CO2 only, rather than the sum of all the WMGGs. If we scale our expectations for warming down by the fraction of the WMGG forcing due to CO2, the model results (without aerosol forcing) happen to cluster in a pleasing way around the observed trend, but one cannot justify isolating CO2’s contribution in this way.
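To see how much the CO2-only comparison shrinks expectations, one can compare CO2's forcing with the WMGG total. The Python sketch below uses the simplified logarithmic CO2 expression of Myhre et al. (1998) and approximate concentrations of about 291 ppm in 1880 and 369 ppm in 2000; these concentrations are illustrative values assumed here, not the ones prescribed in the CM2.1 runs. Only the 57% total is taken from the post.

```python
import math

def co2_forcing(c, c0):
    """Simplified CO2 radiative forcing in W/m^2 (Myhre et al., 1998)."""
    return 5.35 * math.log(c / c0)

F_2X = co2_forcing(2.0, 1.0)  # forcing for a CO2 doubling, about 3.7 W/m^2

# Approximate, illustrative concentrations (ppm); NOT the CM2.1 prescribed values.
co2_fraction = co2_forcing(369.0, 291.0) / F_2X  # CO2 alone, fraction of a doubling
wmgg_fraction = 0.57                             # all WMGGs, 1880-2000, from the post
co2_share = co2_fraction / wmgg_fraction         # CO2's share of the WMGG forcing

print(f"CO2 alone: {co2_fraction:.2f} of a doubling; "
      f"share of WMGG forcing: {co2_share:.2f}")
```

With these assumptions CO2 supplies only about a third of a doubling, roughly 60% of the WMGG total, so scaling the WMGG-only warming by CO2's share lowers the expected warming by about 40%, even though methane, nitrous oxide, and the CFCs contribute the rest of the forcing.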

In preparing this post, I was struck, as some readers may have been as well, by the magnitude of the warming early in the century in these WMGG-only simulations. I have been looking into this a bit and will return to it shortly.

[The views expressed on this blog are in no sense official positions of the Geophysical Fluid Dynamics Laboratory, the National Oceanic and Atmospheric Administration, or the Department of Commerce.]

The model results give a hint of mid-century flattening, which is typically attributed to an increase in cooling aerosols, although not as pronounced as in the GISS curve, nor exactly contemporaneous with it. Is this just part of the random variation inherent in the model runs, or can some of the flattening be attributed to changes in WMGGs?

There is some flattening in the prescribed CO2 evolution (between 1935 and 1945), which is based on Etheridge et al.

Hi,

So, would this be a reliable-ish indication of what would happen if we stopped burning coal and cleaned up other particulate sources?

Or are there other feedbacks (clouds?) that would also act against this? Not sure I’m reassured...

Andy

“Watch out for those who estimate the expected 20th century warming due to WMGGs by rescaling equilibrium sensitivity rather than TCR.

Conversely, watch out for those who compare the observed warming to the model’s response to CO2 only, rather than the sum of all the WMGGs. If we scale our expectations for warming down by the fraction of the WMGG forcing due to CO2, the model results (without aerosol forcing) happen to cluster in a pleasing way around the observed trend, but one cannot justify isolating CO2’s contribution in this way.”

Thanks for that! I’ve seen both of these mistakes made repeatedly.

The steep rise from 1900, which arches over and becomes more gentle toward 1940, could be compatible with a significant jump discontinuity (~0.4 W/m^2) in the forcings at 1900. So I look forward to when you return to this.